Prompts

01 Jan 2017

When prompting a user for a particular response, it is useful to be able to parse and validate that response. For example, when expecting a time input, which may arrive in a natural language format such as “yesterday” or “after noon next Tuesday”, the system should parse the response to extract structured data such as :value "2013-02-12T15:00:00.000-02:00", :grain :hour. This requires that user responses be processed in stages, as a pipeline.

To implement response processing pipelines, Cooee considered three options:

- Stacks

- Using the Receive Pipeline Pattern within Akka

- Akka Streams

The second option, the Receive Pipeline Pattern, was chosen.

Stacks

The Microsoft Bot Framework has an elegant approach based on a stack, which is used more generally by all ‘dialogs’, the Microsoft framework’s term for a conversation flow specification. A ‘prompt’ is a special form of dialog. Multiple dialogs for a user interaction can be pushed onto a stack. A response is processed by the dialog popped off the top of the stack (LIFO order). If there is another dialog in the stack, then the result of the current dialog is passed to the next one ready to be popped. If there are no more dialogs, then the result is sent back to the user.

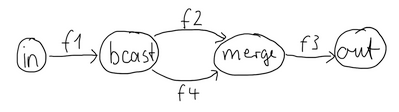
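The stack behaviour described above can be sketched in a few lines of plain Scala. This is only an illustration of the idea: `Dialog`, `DialogStack` and `process` are hypothetical names invented here, not part of the Microsoft Bot Framework API.

```scala
// Hypothetical model of a dialog stack; names are illustrative only.
final case class Dialog(name: String, handle: String => String)

final class DialogStack {
  // The head of the list is the top of the stack.
  private var dialogs: List[Dialog] = Nil

  def push(dialog: Dialog): Unit = dialogs = dialog :: dialogs

  // Pops every dialog from the top down, feeding each result to the next dialog.
  // The result of the last dialog is what would be sent back to the user.
  def process(response: String): String = {
    val result = dialogs.foldLeft(response)((acc, dialog) => dialog.handle(acc))
    dialogs = Nil
    result
  }
}

object DialogStackExample {
  def run(): String = {
    val stack = new DialogStack
    stack.push(Dialog("confirm", parsed => s"Booked for $parsed"))
    // Pushed last, so the prompt is on top and sees the raw response first.
    stack.push(Dialog("prompt", text => text.trim.toLowerCase))
    stack.process("  Tuesday ")
  }
}
```

Here the prompt dialog normalizes the raw text and the confirm dialog receives its result, so `DialogStackExample.run()` yields "Booked for tuesday".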

Akka Streams

The Akka Streams API offers a graph DSL, which can implement a staged pipeline for processing event streams. This option may be reconsidered if there is a requirement to implement a pipeline with junctions (fan-out). It is possible to build reusable, encapsulated components of arbitrary input and output ports using the graph DSL.

Receive Pipeline Pattern

The Receive Pipeline Pattern lets us define general interceptors for our messages and plug an arbitrary number of them into our Actors. It’s useful for defining cross-cutting aspects to be applied to all or many of our Actors.

Multiple interceptors can be added to actors that ‘mixin’ the ReceivePipeline trait. These interceptors internally define layers of decorators around the actor’s behavior. The first interceptor defines an outer decorator which delegates to a decorator corresponding to the second interceptor and so on, until the last interceptor, which defines a decorator for the actor’s Receive function.

For each message received by an interceptor, the interceptor will typically perform some processing based on the message and decide whether or not to pass the received message (or some other message) to the next interceptor.
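The decorator layering can be modelled without Akka to make the control flow concrete. The sketch below is an assumption-laden illustration, not the ReceivePipeline implementation: `Decision`, `PassOn`, `Handled` and `decorate` are hypothetical names chosen for this example.

```scala
import scala.collection.mutable.ListBuffer

object PipelineSketch {
  type Receive = PartialFunction[Any, Unit]

  // What an interceptor decides to do with a message (hypothetical names).
  sealed trait Decision
  final case class PassOn(message: Any) extends Decision // forward this message inward
  case object Handled extends Decision                    // stop processing here

  type Interceptor = PartialFunction[Any, Decision]

  // Wraps `receive` in one decorator per interceptor.
  // foldRight makes the first interceptor in the list the outermost layer.
  def decorate(interceptors: List[Interceptor], receive: Receive): Receive =
    interceptors.foldRight(receive) { (interceptor, inner) =>
      {
        case message =>
          // Messages the interceptor does not match pass through unchanged.
          val decision: Decision =
            interceptor.applyOrElse(message, (m: Any) => PassOn(m))
          decision match {
            case PassOn(next) => if (inner.isDefinedAt(next)) inner(next)
            case Handled      => ()
          }
      }
    }

  def example(): List[String] = {
    val log = ListBuffer.empty[String]
    val dropBlank: Interceptor = { case s: String if s.trim.isEmpty => Handled }
    val trim: Interceptor = { case s: String => PassOn(s.trim) }
    val receive: Receive = { case s: String => log += s; () }

    val decorated = decorate(List(dropBlank, trim), receive)
    decorated("   ")   // swallowed by the outer interceptor
    decorated(" hi ")  // trimmed by the second interceptor, then received
    log.toList
  }
}
```

In this model the blank message never reaches the inner layers, while " hi " arrives at the actor's behavior as "hi".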

A PromptInterceptor can be mixed into a conversation actor to preprocess response messages, in this case to parse and validate an expected response defined by the ‘prompt’ set when the message was sent.

Prompt Types

The Cooee framework supports the following types of prompts:

- time, e.g. “today” or “Monday, Feb 18” - both points in time and intervals

- temperature, e.g. “65 degrees”

- number, e.g. “100K” or “four”

- ordinal, e.g. “first”

- distance, e.g. “7 km” or “2 inches” and conversion to a normalized unit (metre)

- volume, e.g. “250ml” or “1 gallon” and conversion to a normalized unit (litre)

- money, e.g. “ten dollars” or “$20.30”

- duration, e.g. “2 hours” or “3 min” and conversion to a normalized unit (seconds)

- URL

- phone number, e.g. “415-123-3444” or “(650)-283-4757 ext 897”

- selection from a defined list, either by ordinal number of the item’s index in the list, or nearest textual match (using Levenshtein distance)
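As an illustration of the last prompt type, selection from a list can be sketched with a standard dynamic-programming Levenshtein distance. The names `levenshtein` and `select` are hypothetical, and treating a purely numeric response as a 1-based position in the list is an assumption made for this example, not something the framework is known to do.

```scala
object SelectionSketch {
  // Levenshtein distance: insertions, deletions and substitutions each cost 1.
  def levenshtein(a: String, b: String): Int = {
    var previous = Array.range(0, b.length + 1)
    for (i <- 1 to a.length) {
      val current = new Array[Int](b.length + 1)
      current(0) = i
      for (j <- 1 to b.length) {
        val substitution = previous(j - 1) + (if (a(i - 1) == b(j - 1)) 0 else 1)
        current(j) = math.min(math.min(previous(j) + 1, current(j - 1) + 1), substitution)
      }
      previous = current
    }
    previous(b.length)
  }

  // Picks an option by position (assumed 1-based) or by nearest textual match.
  def select(options: List[String], response: String): Option[String] = {
    val text = response.trim.toLowerCase
    if (options.isEmpty) None
    else if (text.nonEmpty && text.forall(_.isDigit))
      scala.util.Try(text.toInt).toOption.flatMap(n => options.lift(n - 1))
    else
      Some(options.minBy(option => levenshtein(option.toLowerCase, text)))
  }
}
```

With the options "Small", "Medium" and "Large", a response of "2" selects "Medium" and a misspelt "lrge" selects "Large". A real implementation would probably also reject matches whose distance exceeds some threshold.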

The PromptInterceptor will add the parsed structured data as additional context to the message, which can be used by the conversation logic.

    case class TextResponse(platform: Platform,
                            sender: String,
                            text: String,
                            context: Option[Map[String, Any]] = None)

The PromptInterceptor uses the excellent Duckling library.
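To show how parsed data might end up in `context`, here is a minimal sketch for a number prompt. It deliberately does not call Duckling: `parseNumber` is a toy stand-in that only understands inputs like “four” and “100K”, and the `Platform` placeholder, the `enrich` function and the "number" context key are all assumptions made for this example.

```scala
object PromptSketch {
  // Placeholder for the framework's Platform type, which is not shown in the text.
  sealed trait Platform
  case object Web extends Platform

  final case class TextResponse(platform: Platform,
                                sender: String,
                                text: String,
                                context: Option[Map[String, Any]] = None)

  private val words =
    Map("one" -> 1.0, "two" -> 2.0, "three" -> 3.0, "four" -> 4.0, "five" -> 5.0)
  private val numeric = """(\d+(?:\.\d+)?)([kKmM]?)""".r

  // Toy stand-in for a real parser such as Duckling.
  def parseNumber(text: String): Option[Double] = text.trim match {
    case numeric(digits, suffix) =>
      val scale = suffix.toLowerCase match {
        case "k" => 1e3
        case "m" => 1e6
        case _   => 1.0
      }
      Some(digits.toDouble * scale)
    case other => words.get(other.toLowerCase)
  }

  // Returns the response with the parsed value added under the hypothetical
  // "number" key, or None when the text does not validate as a number.
  def enrich(response: TextResponse): Option[TextResponse] =
    parseNumber(response.text).map { value =>
      val existing = response.context.getOrElse(Map.empty[String, Any])
      response.copy(context = Some(existing + ("number" -> value)))
    }
}
```

A `None` result gives the conversation logic a natural point at which to re-prompt the user, while a successful parse leaves the original text intact alongside the structured value.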